Everyone’s Asking About “Open-Claw Strategy”

But Nobody Even Has an AI Strategy Yet

I’m a huge fan of Jensen Huang and what NVIDIA has built. That’s not fluff. They’ve managed to reposition themselves from a hardware company into the gravitational center of modern computing. That doesn’t happen by accident.

And now they’re doing it again, this time with what’s being framed as their “Open-Claw” moment.

You can already see the messaging taking shape. The question is starting to float around boardrooms and LinkedIn threads:

What’s your Open-Claw strategy?

It sounds smart. It sounds forward-looking. It sounds like the kind of question you’re supposed to be asking right now.

But if we’re being honest, it’s also a little premature.

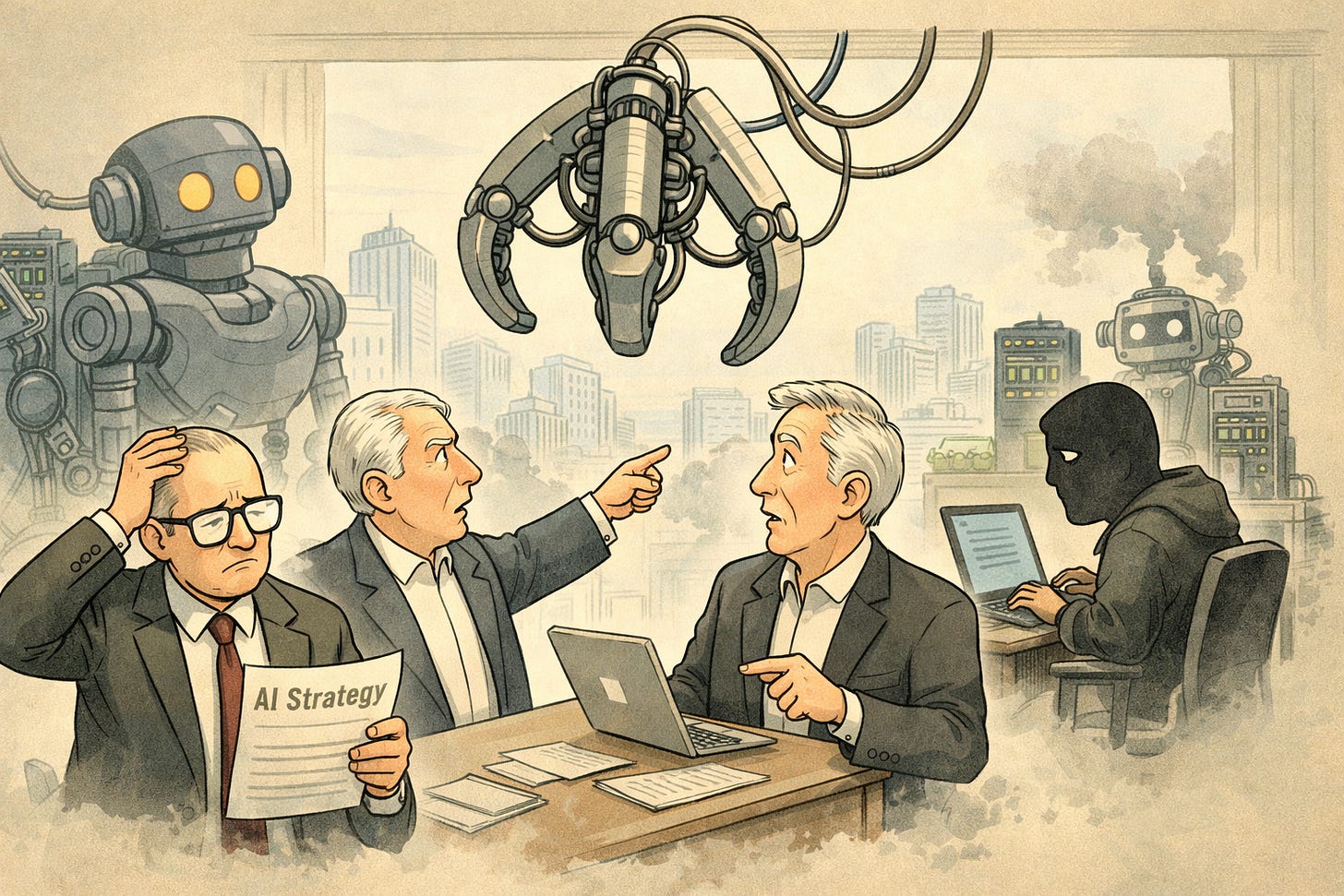

Most companies don’t even have an AI strategy yet. Not a real one.

What they have is urgency. A kind of low-grade panic dressed up as innovation. The strategy, if you strip it down, is basically this: use AI before our competitors do. Hook up an API, wire something into a workflow, ship it, and figure the rest out later.

We’ve seen this movie before. That’s how Shadow IT happened the first time. Departments solving their own problems faster than the organization could govern them. Tools showing up before policies, data moving before anyone understood where it was going.

Now we’re doing it again, except this time the systems aren’t just storing data. They’re making decisions.

So when I hear “what’s your Open-Claw strategy,” the question that immediately comes to mind is something a lot less exciting and a lot more important: what’s your Shadow AI strategy? Or more bluntly, how are you planning to not lose control of your own systems?

Because that’s what’s actually at stake here.

Agents are already spinning up outside of governance. Models are being trained on data nobody has properly tracked. Pipelines are being stitched together by well-meaning teams who just want to move faster. And once those systems start operating with any level of autonomy, you don’t just have sprawl, you have opacity.

At the same time, the push toward localized agentics is very real, and very justified. I’ve been talking about this for a while now, well before the current wave picked up. I even published a case study months back asking people what their OpenCL strategy was going to look like, because it was obvious where this was heading.

When compute moves closer to the edge, when agents start running locally, when orchestration becomes more distributed, the entire model shifts. Security boundaries blur. Observability becomes harder, not easier. Ownership gets fuzzy. You don’t always know where a decision was made, or why.

NVIDIA is right to push in this direction. They’re not wrong about the future. But there’s a difference between being directionally right and being operationally ready. Acceleration without control doesn’t create advantage. It creates mess, just at a higher speed.

Which is why the current failure estimates feel off.

The commonly cited numbers sound reasonable until you spend any time actually looking at how these systems are being deployed. In practice, the failure rate feels a lot closer to 80 or 90 percent. Maybe higher. Not because the models don’t work, but because everything around the models is still immature.

No clear governance. No consistent lineage. No real enforcement. Limited auditability. Plenty of enthusiasm.

About a year ago, I put that stake in the ground more explicitly in a white paper.

The argument was simple and hasn’t really changed. Most AI projects aren’t going to fail because the model was bad. They’re going to fail because the data wasn’t trusted, the systems weren’t observable, and the organization never had real control in the first place.

AI isn’t a shortcut around those problems. If anything, it amplifies them.

If your data is fragmented, your outputs will be inconsistent. If you don’t have lineage, you don’t have accountability. And if you can’t trace how a decision was made, you can’t defend it, fix it, or even fully understand it.
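To make the lineage point concrete, here is a minimal sketch of what a traceable decision record could look like. It is an illustration under stated assumptions, not a description of any particular product: the names `DecisionRecord` and `DecisionLog`, the field layout, and the choice to hash-chain records are all hypothetical and picked only for this example.

```python
import hashlib
import json
from dataclasses import dataclass, field, asdict


@dataclass
class DecisionRecord:
    """One step an agent took and what it was based on (hypothetical schema)."""
    agent_id: str
    action: str
    inputs: list          # identifiers of the data sources this step read
    output: str
    prev_hash: str = ""   # hash of the previous record, forming a chain
    record_hash: str = field(default="", init=False)

    def seal(self) -> "DecisionRecord":
        # Hash every field except record_hash itself, so later edits are detectable.
        payload = asdict(self)
        payload.pop("record_hash")
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        self.record_hash = hashlib.sha256(encoded).hexdigest()
        return self


class DecisionLog:
    """Append-only log of agent decisions (assumed in-memory for brevity)."""

    def __init__(self):
        self.records = []

    def append(self, agent_id, action, inputs, output):
        prev = self.records[-1].record_hash if self.records else ""
        record = DecisionRecord(agent_id, action, list(inputs), output, prev).seal()
        self.records.append(record)
        return record

    def verify(self) -> bool:
        # Recompute each hash and check that every record points at its predecessor.
        prev = ""
        for r in self.records:
            expected = DecisionRecord(
                r.agent_id, r.action, r.inputs, r.output, r.prev_hash
            ).seal().record_hash
            if r.prev_hash != prev or r.record_hash != expected:
                return False
            prev = r.record_hash
        return True

    def lineage(self, output):
        """Return the input sources behind every record that produced `output`."""
        return [r.inputs for r in self.records if r.output == output]
```

With something like this in place, a call such as `log.lineage("credit_approved")` answers what a decision was based on, and `log.verify()` answers whether the record has been altered since it was written. A real system would also need durable storage, access control, and timestamps, all of which this sketch leaves out.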

The industry keeps trying to build this stack from the top down. Start with the model, wrap it in a slick interface, and hope the data layer sorts itself out over time. It rarely does.

That gap is exactly why we built REzolvr.

Not as another model or another assistant, but as a way to bring visibility back into systems that are getting harder to see. To trace decisions, not just outputs. To understand what an agent actually did, not just what it returned.

Because the future we’re heading into isn’t just more AI. It’s more autonomous systems making more decisions with less direct human involvement. And if you don’t have a way to see those decisions clearly, you don’t really have control. You just have activity.

NVIDIA is going to keep pushing this forward. They should. Open-Claw, localized compute, agentic systems, all of it is going to unlock real capability.

But asking companies for their Open-Claw strategy right now feels a bit like asking for their space program while they’re still figuring out electricity.

We’re skipping steps.

The real dividing line over the next few years won’t be who adopted AI first or fastest. It’ll be who understood what their systems were actually doing.

Speed gets attention.

Control wins.

About the Author

Brian P. Christian (B.P. Christian) is a technology founder and security architect focused on AI governance and software supply chain integrity.

He is the Founder and CEO of Flying Cloud Technology, building enterprise data security and provenance platforms for regulated environments. Over two decades, he has founded and led multiple security companies, including SPI Dynamics (acquired by Hewlett-Packard) and Zettaset, delivering security infrastructure at global scale.

Before “agentic AI” was mainstream, he designed the precursor to localized, workflow-embedded AI systems through REzolvrAI and REwerkr. He is also the creator of ForgeProof, an open-source (Apache 2.0) code attestation framework built from Flying Cloud’s chain-of-custody architecture.

He writes on Substack and is completing his forthcoming book, Inferior God Complex: Why We Fear What We Build — and Obey What We Don’t Understand.